Base R uses two main classes to handle date and time data:

Date: for dates (year, month, day).

POSIXct and POSIXlt: for date-time (date plus time of day).

In computer programming, a “class” is a blueprint or template for creating objects, providing initial values for state (member variables or attributes) and implementations of behavior (member functions or methods).

Classes encapsulate data for the objects created from them, enabling the principle of data abstraction and encapsulation. This concept allows for the creation of complex data structures that can model real-world entities or abstract concepts, facilitating object-oriented programming (OOP).

Classes define the properties and functionalities that their instances (objects) will have, allowing for code reuse and modularity.

Let’s start by understanding how to work with these two classes.

Date class

The Date class is the simplest, and is used to handle dates without a time of day.

rm(list=ls())

# Get current date

today <- Sys.Date()

print(today)

[1] "2025-02-28"

# Creating a Date object

specific_date <- as.Date("2023-12-11")

print(specific_date)

[1] "2023-12-11"

POSIXct and POSIXlt are used for data that includes both date and time information.

POSIXct represents the date-time as the number of seconds since the beginning of 1970 (known as the Unix epoch), whereas POSIXlt is a list that contains detailed information about the date-time (year, month, day, hour, and so on).

Note that Excel uses a different time base for its date and time serial numbers: by default it counts days from the start of 1900, rather than seconds from 1970.
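As a minimal sketch of the difference between the two classes, the following peeks inside one object of each type. The variable names are illustrative only, and the time zone is fixed to UTC here (an assumption, so the numbers don't depend on where the code is run).

```r
# POSIXct: a single number, the seconds elapsed since 1970-01-01 00:00:00 UTC
one_day_in <- as.POSIXct("1970-01-02 00:00:00", tz = "UTC")
epoch_seconds <- as.numeric(one_day_in)   # 86400, i.e. exactly one day of seconds

# POSIXlt: a list of named components describing the date-time
kickoff <- as.POSIXlt("2023-12-11 20:59:59", tz = "UTC")
kickoff_hour  <- kickoff$hour         # hour of the day (0-23)
kickoff_month <- kickoff$mon + 1      # months are stored as 0-11, so add 1
kickoff_year  <- kickoff$year + 1900  # years are stored as years since 1900

print(epoch_seconds)
print(c(kickoff_year, kickoff_month, kickoff_hour))
```

In practice POSIXct is usually the more convenient choice for columns in a data frame, because each value is just one number; POSIXlt is handy when you want to pull out individual components.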

# Current date-time

now <- Sys.time()

print(now)

[1] "2025-02-28 10:54:02 GMT"

# Creating a POSIXct object

specific_datetime <- as.POSIXct("2023-12-11 20:59:59")

print(specific_datetime)

[1] "2023-12-11 20:59:59 GMT"

Using as.Date(), you can convert character strings to Date objects. In this example, I’ve specified the format in which the date information is presented ("%Y-%m-%d"):

# Convert a character string (2023-12-11) to a Date

date_from_string <- as.Date("2023-12-11", format="%Y-%m-%d")

print(date_from_string)

[1] "2023-12-11"

The important point to remember is that R now understands that this object represents time-based information, rather than just a string of characters. It’s similar to how we defined certain strings as factors in previous sections of the module.

Using as.POSIXct(), we can also work with date-time strings.

# Convert a character string to POSIXct

datetime_from_string <- as.POSIXct("2022-01-01 12:00:00", format="%Y-%m-%d %H:%M:%S")

print(datetime_from_string)

[1] "2022-01-01 12:00:00 GMT"

Dates and times can come in various formats. I’ve lost track of the number of different ways in which I’ve seen date and time-based information represented in sport-related datasets.

Regardless of the format in which we receive the data, it’s crucial to match that format in the format argument of the as.Date() or as.POSIXct() functions.
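To see why the match matters, here is a small sketch of what can happen when the format is wrong or ambiguous. The string and variable names are made up for illustration. A format that doesn't fit the string gives NA, while a format that fits but is the wrong convention silently gives a different date.

```r
raw <- "01/02/2022"

# Format does not match the string at all: the result is NA, not an error
wrong_format <- as.Date(raw, format = "%Y-%m-%d")

# Both of these formats "fit" the string, but they mean different days
as_day_first   <- as.Date(raw, format = "%d/%m/%Y")  # 1 February 2022
as_month_first <- as.Date(raw, format = "%m/%d/%Y")  # 2 January 2022

print(wrong_format)
print(as_day_first)
print(as_month_first)
```

The second case is the more dangerous one, because R can't tell you that anything went wrong. It's worth checking a few converted values against the raw data whenever day and month could be swapped.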

# Different date formats

date_euro_format <- as.Date("01/02/2022", format="%d/%m/%Y") # Day/Month/Year

print(date_euro_format)

[1] "2022-02-01"

# Time in 12-hour format

datetime_12hr <- as.POSIXct("01/02/2022 01:30:00 PM", format="%d/%m/%Y %I:%M:%S %p")

print(datetime_12hr)

[1] "2022-02-01 13:30:00 GMT"

In the following code, I’ll create an example dataset that contains the type of ‘messy’ date and time information we often find in sport data:

# Dataset with date and time in different formats

date_time_data <- data.frame(

date_string = c("2023-12-19", "19-Dec-2023", "12/19/2023", "20231219",

"2023/12/19 14:20", "19-Dec-2023 14:20", "12/19/2023 14:20", "202312191420"),

format = c("YYYY-MM-DD", "DD-MMM-YYYY", "MM/DD/YYYY", "YYYYMMDD",

"YYYY/MM/DD HH:MM", "DD-MMM-YYYY HH:MM", "MM/DD/YYYY HH:MM", "YYYYMMDDHHMM")

)

# Show original dataset

print("Original Dataset with Various Date Formats")

[1] "Original Dataset with Various Date Formats"

print(date_time_data)

        date_string            format
1        2023-12-19        YYYY-MM-DD
2       19-Dec-2023       DD-MMM-YYYY
3        12/19/2023        MM/DD/YYYY
4          20231219          YYYYMMDD
5  2023/12/19 14:20  YYYY/MM/DD HH:MM
6 19-Dec-2023 14:20 DD-MMM-YYYY HH:MM
7  12/19/2023 14:20  MM/DD/YYYY HH:MM
8      202312191420      YYYYMMDDHHMM

Now, I can use the same process to convert that information to a format that R will understand. Note that I have to tell R what the format of my original data is:

# Convert date strings to Date objects using as.Date()

date_time_data$date_as_date <- c(

as.Date(date_time_data$date_string[1], format = "%Y-%m-%d"),

as.Date(date_time_data$date_string[2], format = "%d-%b-%Y"),

as.Date(date_time_data$date_string[3], format = "%m/%d/%Y"),

as.Date(date_time_data$date_string[4], format = "%Y%m%d"),

as.Date(date_time_data$date_string[5], format = "%Y/%m/%d"),

as.Date(date_time_data$date_string[6], format = "%d-%b-%Y"),

as.Date(date_time_data$date_string[7], format = "%m/%d/%Y"),

as.Date(date_time_data$date_string[8], format = "%Y%m%d")

)

# Convert date strings to POSIXct datetime objects using as.POSIXct()

date_time_data$datetime_as_posix <- c(

as.POSIXct(date_time_data$date_string[1], format = "%Y-%m-%d"),

as.POSIXct(date_time_data$date_string[2], format = "%d-%b-%Y"),

as.POSIXct(date_time_data$date_string[3], format = "%m/%d/%Y"),

as.POSIXct(date_time_data$date_string[4], format = "%Y%m%d"),

as.POSIXct(date_time_data$date_string[5], format = "%Y/%m/%d %H:%M"),

as.POSIXct(date_time_data$date_string[6], format = "%d-%b-%Y %H:%M"),

as.POSIXct(date_time_data$date_string[7], format = "%m/%d/%Y %H:%M"),

as.POSIXct(date_time_data$date_string[8], format = "%Y%m%d%H%M")

)

# Show the dataset with converted date and datetime columns

print("Dataset with Converted Date and DateTime Columns")

[1] "Dataset with Converted Date and DateTime Columns"

print(date_time_data)

        date_string            format date_as_date   datetime_as_posix
1        2023-12-19        YYYY-MM-DD   2023-12-19 2023-12-19 00:00:00
2       19-Dec-2023       DD-MMM-YYYY   2023-12-19 2023-12-19 00:00:00
3        12/19/2023        MM/DD/YYYY   2023-12-19 2023-12-19 00:00:00
4          20231219          YYYYMMDD   2023-12-19 2023-12-19 00:00:00
5  2023/12/19 14:20  YYYY/MM/DD HH:MM   2023-12-19 2023-12-19 14:20:00
6 19-Dec-2023 14:20 DD-MMM-YYYY HH:MM   2023-12-19 2023-12-19 14:20:00
7  12/19/2023 14:20  MM/DD/YYYY HH:MM   2023-12-19 2023-12-19 14:20:00
8      202312191420      YYYYMMDDHHMM   2023-12-19 2023-12-19 14:20:00

Sometimes you might want to extract the year, month, day etc., from an existing variable.

This can be done as follows:

specific_datetime <- as.POSIXct("2023-12-11 20:59:59")

# Extracting components

year <- format(specific_datetime, "%Y")

month <- format(specific_datetime, "%m")

day <- format(specific_datetime, "%d")

hour <- format(specific_datetime, "%H")

minutes <- format(specific_datetime, "%M")

seconds <- format(specific_datetime, "%S")

print(paste("Year:", year, "- Month:", month, "- Day:", day, "- Hour:", hour, "- Minutes:", minutes, "- Seconds:", seconds))

[1] "Year: 2023 - Month: 12 - Day: 11 - Hour: 20 - Minutes: 59 - Seconds: 59"

For further analysis, we might wish to modify or extract elements from our time-based data.

Some examples include:

# Adding days to a date

future_date <- specific_date + 30

print(future_date)

[1] "2024-01-10"

# Subtracting time from a datetime

past_datetime <- specific_datetime - as.difftime(1, units="hours")

print(past_datetime)

[1] "2023-12-11 19:59:59 GMT"

# Difference in days

date_diff <- as.Date("2022-02-01") - as.Date("2022-01-01")

print(date_diff)

Time difference of 31 days

# Difference in seconds

time_diff <- as.POSIXct("2022-01-01 13:00:00") - as.POSIXct("2022-01-01 12:00:00")

print(as.numeric(time_diff, units="secs"))

[1] 3600

Handling time zones in POSIXct is a critical aspect of date-time manipulation.

This is particularly important if you’re working with data gathered from different countries.
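As a rough sketch of why this matters: the same clock time in two time zones is not the same moment. The variable names below are illustrative, and the example assumes your system has the standard time zone database available (which is the case for most R installations).

```r
# Noon on the same calendar day, recorded in two different cities
noon_ny     <- as.POSIXct("2023-01-01 12:00:00", tz = "America/New_York")
noon_london <- as.POSIXct("2023-01-01 12:00:00", tz = "Europe/London")

# How many hours after London's noon does New York's noon occur?
gap_hours <- as.numeric(difftime(noon_ny, noon_london, units = "hours"))

# The New York time, displayed on a London clock
ny_in_london_time <- format(noon_ny, tz = "Europe/London", usetz = TRUE)

print(gap_hours)
print(ny_in_london_time)
```

If the time zone is left out when the data is read in, R assumes your local zone, so two events that look simultaneous in a spreadsheet may in fact be hours apart.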

# Creating a POSIXct object with a specific time zone

datetime_ny <- as.POSIXct("2023-01-01 12:00:00", tz="America/New_York")

datetime_london <- as.POSIXct("2023-01-01 12:00:00", tz="Europe/London")

# Comparing times

print(datetime_ny)

[1] "2023-01-01 12:00:00 EST"

print(datetime_london)

[1] "2023-01-01 12:00:00 GMT"

lubridate package

So far, we’ve focused on the two time-based functions that come with base R. Now, we will add the lubridate package to our toolkit.

The lubridate package is a powerful and user-friendly tool designed to simplify the handling and manipulation of dates and times.

As part of the tidyverse, it provides a comprehensive set of functions that make it easier to perform common tasks such as parsing, manipulating, and doing arithmetic with date-time objects.

lubridate addresses the complexity of date-time data types by offering functions that intuitively deal with time zones, daylight saving times, and various date-time formats.

It’s important to note that its functionality is built around three kinds of object: dates, date-times (POSIXct and POSIXlt), and time spans (durations, periods, and intervals).
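The three kinds of time span are easiest to tell apart around a clock change. The following is a minimal sketch, assuming lubridate is installed; the date is chosen because US clocks went forward on 13 March 2022, and the variable names are illustrative.

```r
library(lubridate)

# Noon on the day before the clocks go forward in New York
start <- ymd_hms("2022-03-12 12:00:00", tz = "America/New_York")

plus_duration <- start + ddays(1)  # duration: exactly 86,400 seconds later
plus_period   <- start + days(1)   # period: same clock time on the next day

# The two answers differ because that particular day is only 23 hours long
gap_hours <- as.numeric(difftime(plus_duration, plus_period, units = "hours"))

# An interval is anchored to two specific instants, so its length is exact
span <- interval(start, plus_period)
span_hours <- time_length(span, unit = "hours")

print(plus_duration)
print(plus_period)
print(c(gap_hours, span_hours))
```

Which one you want depends on the question: "24 hours of recovery" is a duration, whereas "same time tomorrow" is a period.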

library(lubridate)

Attaching package: 'lubridate'

The following objects are masked from 'package:base':

date, intersect, setdiff, union

# Easy parsing of dates

ymd("20220101")

[1] "2022-01-01"

mdy("01/02/2022")

[1] "2022-01-02"

dmy("02-01-2022")

[1] "2022-01-02"

# Arithmetic with lubridate

date1 <- ymd("2022-01-01")

date1 %m+% months(1) # Add a month

[1] "2022-02-01"

date1 %m-% months(1) # Subtract a month

[1] "2021-12-01"

# Extracting components

year(date1)

[1] 2022

month(date1)

[1] 1

day(date1)

[1] 1

# Rounding off date and time to the nearest day, hour, etc.

round_date(datetime_ny, unit="day")

[1] "2023-01-02 EST"

floor_date(datetime_ny, unit="hour")

[1] "2023-01-01 12:00:00 EST"

ceiling_date(datetime_ny, unit="minute")

[1] "2023-01-01 12:00:00 EST"

# Dealing with duration and period: understanding the difference between duration (exact time spans) and period (human-readable time spans).

# Duration: exact time spans

duration_one_day <- ddays(1)

duration_one_hour <- dhours(1)

datetime_ny + duration_one_day

[1] "2023-01-02 12:00:00 EST"

# Period: human-readable time spans

period_one_month <- months(1)

date1 + period_one_month

[1] "2022-02-01"

# Handling Daylight Saving Time: dealing with complexities due to changes in daylight saving time.

# Before daylight saving time

dt1 <- as.POSIXct("2022-03-13 01:59:59", tz="America/New_York")

# After daylight saving time

dt2 <- dt1 + dhours(1)

print(dt1)

[1] "2022-03-13 01:59:59 EST"

print(dt2)

[1] "2022-03-13 03:59:59 EDT"

First, I’ll load some libraries.

rm(list=ls()) # clear environment

# Load necessary libraries

library(ggplot2)

library(forecast)

library(tseries)

For this demonstration I’m going to create an artificial dataset with two variables: Week and Speed. This represents the average speed a runner has achieved during training each week for a full year.

# Average running speed of an athlete over 52 weeks

set.seed(123) # For reproducibility

weeks <- 1:52

speed <- round(10 + rnorm(52, mean = 0, sd = 0.4),2) # Average speed with some random variation

sports_data <- data.frame(Week = weeks, Speed = speed)

rm(speed)

rm(weeks)

head(sports_data)

  Week Speed
1    1  9.78
2    2  9.91
3    3 10.62
4    4 10.03
5    5 10.05
6    6 10.69

So we have one observation (Speed) for each Week that has been observed.

We can say this is a time series because it is a regular capture of information over a consistent time frame.

In order to take advantage of the specific features of time series analysis (TSA), we need to tell R that the data is actually in the form of a time series.

So, we convert the dataframe sports_data to a time series format.

If we don’t do this, R won’t know that it’s a time series, and we will not be able to use specialised TSA functions to examine the important features of such data (such as seasonality, or periodicity).

This is critical, and worth taking some time to examine.

In a previous section, we introduced the ts command, which lets us tell R our data is a time series.

We’ll stick with the simplest form for now, which is ts(data, frequency). If you want to go further into this, you’ll find that you can create start and end dates as well.

In this code, data represents the numerical data you want to convert into a time series. In our case, it’s the Speed variable.

The frequency is the number of observations per unit of time. For example, 12 for monthly data in a year, 4 for quarterly data, etc.

It’s important to understand how this works: we’re telling R how many observations we have for one year.

In our case, the data is weekly, so we enter 52 as frequency (and sports_data$Speed as our data).
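For readers who do want to go further, here is a minimal sketch of the start argument mentioned above, using made-up monthly data rather than our running dataset. The start year (2023) and the variable names are assumptions for illustration only.

```r
# 24 monthly observations, beginning in January 2023
monthly <- ts(1:24, start = c(2023, 1), frequency = 12)

series_end <- end(monthly)        # (year, month) of the last observation
position_in_year <- cycle(monthly) # which month (1-12) each observation falls in

# Once R knows the dates, we can cut out a period by name rather than by row number
second_year <- window(monthly, start = c(2024, 1))

print(series_end)
print(second_year)
```

Because start and frequency together pin down the date of every observation, there is usually no need to supply end as well; R works it out from the length of the data.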

# Convert to time series using the ts function

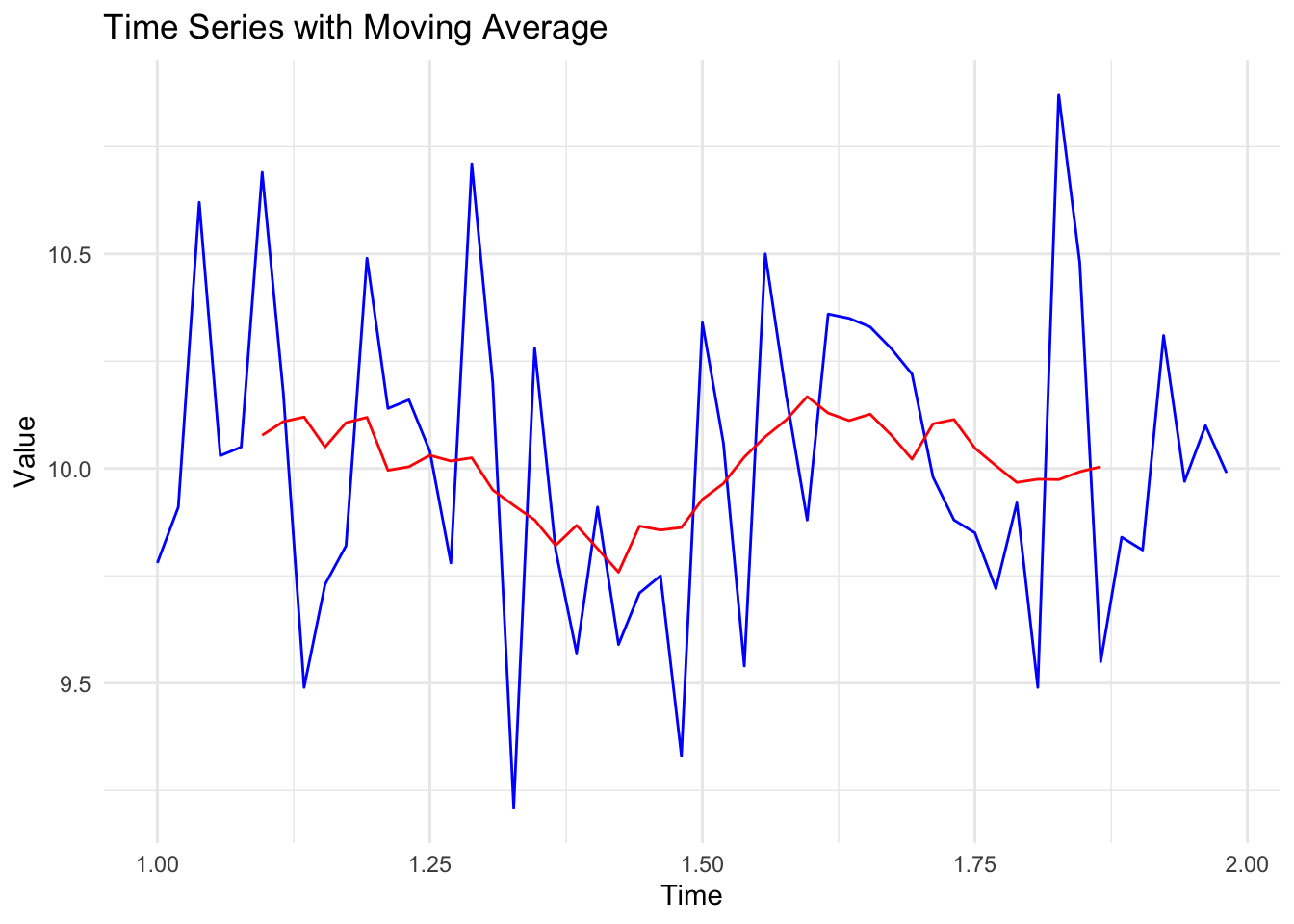

ts_data <- ts(sports_data$Speed, frequency = 52)We can now plot this time series as follows. Note that I’ve added a simple moving average in red to help simplify the data. Also, notice that R has understood that each observation represents a weekly time period (with week one = 1.0, and week 52 = 1.9).

library(ggplot2)

library(zoo)

Attaching package: 'zoo'

The following objects are masked from 'package:base':

as.Date, as.Date.numeric

# Convert time series to data frame

# (as.numeric() drops the ts class from the time index, so ggplot2 can choose a continuous scale without complaint)

ts_df <- data.frame(Time = as.numeric(time(ts_data)), Value = as.vector(ts_data))

# Calculate the moving average

ts_df$MovingAvg <- rollmean(ts_df$Value, k = 12, fill = NA, align = "center")

# Plot using ggplot2

ggplot(ts_df, aes(x = Time)) +

geom_line(aes(y = Value), color = "blue") + # Original time series

geom_line(aes(y = MovingAvg), color = "red") + # Moving average

labs(x = "Time", y = "Value", title = "Time Series with Moving Average") +

theme_minimal()

Warning: Removed 11 rows containing missing values or values outside the scale range

(`geom_line()`).
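The warning about 11 removed rows is nothing to worry about: it comes from the moving average, which can't be computed where the window runs off either end of the data. A small sketch of the idea, using toy vectors rather than our dataset (it assumes the zoo package is installed):

```r
library(zoo)

# A window of 3 needs one neighbour on each side, so the two end points are NA
x <- 1:10
ma3 <- rollmean(x, k = 3, fill = NA, align = "center")
n_missing_k3 <- sum(is.na(ma3))

# In general a window of k leaves k - 1 gaps: with k = 12 that is 11
y <- seq_len(52)
ma12 <- rollmean(y, k = 12, fill = NA, align = "center")
n_missing_k12 <- sum(is.na(ma12))

print(ma3)
print(c(n_missing_k3, n_missing_k12))
```

A wider window gives a smoother line but a shorter one, which is worth bearing in mind with a series of only 52 points.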

When creating time series data in R, I tend to think of the ‘big period’ and the ‘little period’. In this case, big period was a year, and little period was a week.

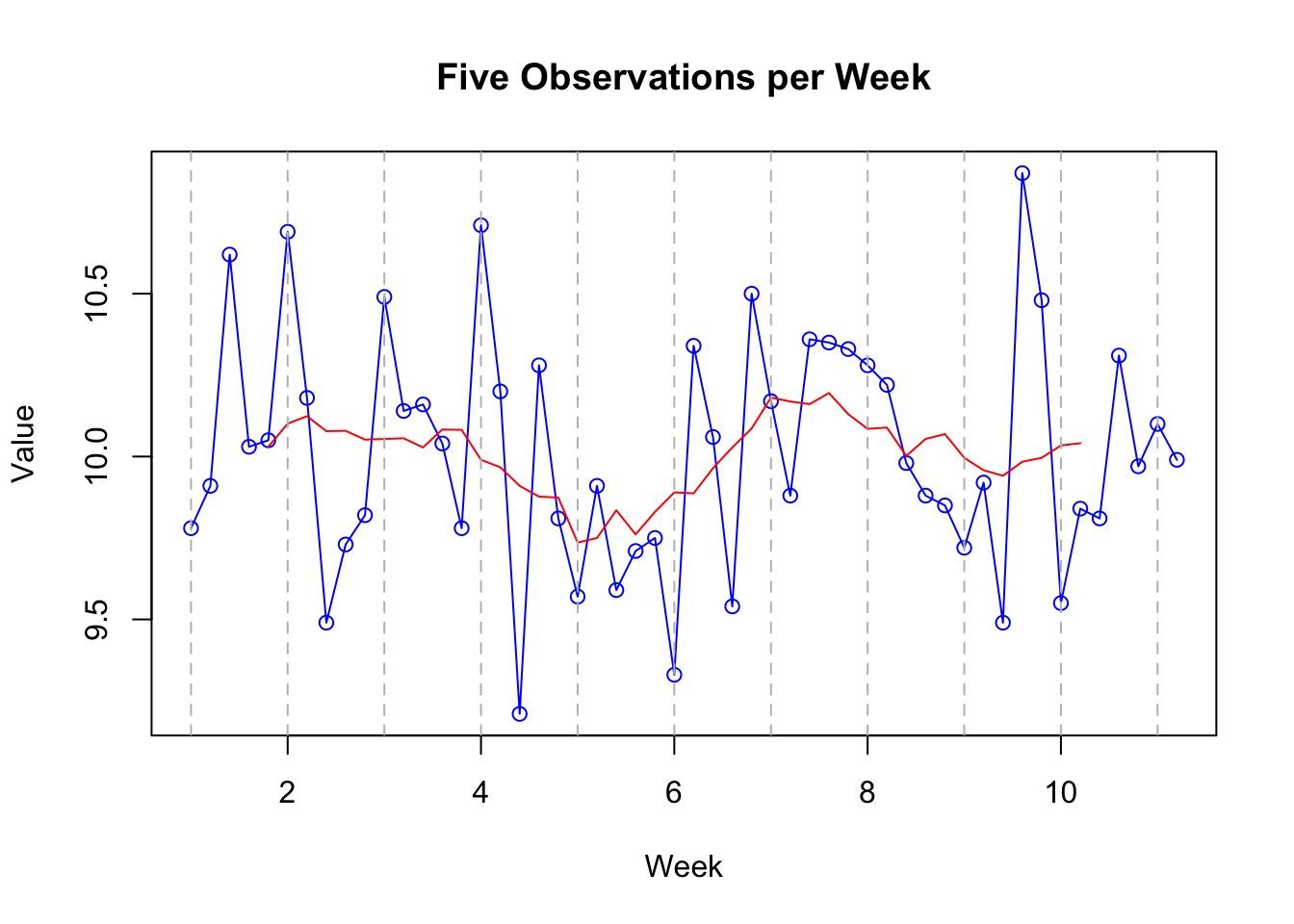

Imagine our data had been collected differently. Let’s say we had collected 52 observations, but instead of one per week, we’d collected five per week.

In this case, our ‘big period’ would be the week, and our ‘little period’ how many observations we have per week (5).

We’d create our time-series object like this:

# Convert to time series

ts_data_02 <- ts(sports_data$Speed, frequency = 5)

In this case, we’ve told our object that there are 5 observations per time period (i.e., 5 observations per week, or ‘big period’). Remember: our object doesn’t care what the period is…it just needs to know how many observations are in each period.

A plot of that data would look like this:

# Plotting the time series with 'Week' on the x-axis

plot(ts_data_02, type = "o", col = "blue", main = "Five Observations per Week", xlab = "Week", ylab = "Value")

# Apply a simple moving average

# Calculate the moving average over a window of 10 observations (two weeks)

moving_avg <- rollmean(ts_data_02, k = 10, fill = NA, align = "center")

lines(moving_avg, col="red")

# for clarity, I've added a vertical line at the start of each week

# (the x-axis is measured in weeks, so the lines go at 1, 2, 3, ...)

abline(v = seq(1, floor(max(time(ts_data_02)))), col = "gray", lty = 2)

Note that the shape of the series is exactly the same! The only thing that’s changed is the time axis: it now runs over just more than ten weeks (from 1.0 to 11.2) rather than a single year, because there are 5 observations per time period rather than 52.

This highlights the importance of having equal numbers of observations per time period. It wouldn’t work (well it could but we’re keeping it simple) if you had three observations one week, and six the next.
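If you want to check how R has laid the observations out, time() and cycle() show the ‘big period’ and ‘little period’ directly. This is a minimal sketch on placeholder values (the numbers 1 to 52 stand in for the speeds; the variable names are illustrative):

```r
# 52 observations, 5 per 'big period' (week)
obs <- ts(seq_len(52), frequency = 5)

time_index   <- as.numeric(time(obs))  # position on the x-axis, in weeks
slot_in_week <- as.integer(cycle(obs)) # which of the 5 slots each observation fills

full_weeks <- length(obs) %/% frequency(obs)  # complete weeks of data
leftover   <- length(obs) %%  frequency(obs)  # observations in the final, partial week

print(range(time_index))
print(head(slot_in_week, 7))
print(c(full_weeks, leftover))
```

So with 52 observations at 5 per week we have ten complete weeks plus two extra observations, which is why the x-axis of the plot ends just past 11.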

So we have covered the idea of creating a time-series dataset that can now be explored using specific techniques designed for such data.

As noted earlier, two of the main things we’re interested in when dealing with time-series data are trends and seasonality in the data.

Trend: in time series data, a ‘trend’ is the overall direction in which the data is moving over time. It can go up, down, or stay relatively flat.

Seasonality: ‘Seasonality’ refers to regular, predictable changes that occur in time series data at specific periods, much like the way ice cream sales increase in summer and decrease in winter. Note that the word ‘seasonal’ need not have anything to do with the seasons of the year. The season is the technical term for the ‘big period’.

We can use the stl function to examine trends and seasonality.

Caveat: I am going to complicate this slightly by saying that this analysis requires more than two full seasons (‘big periods’) of data. If you remember, our first dataset ts_data had a season of one year, but we only had one season (year) of data. Our second dataset ts_data_02 had a season of a week, and we had just over 10 of those. So we are going to use that dataset for this example.
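To make the caveat concrete, here is a small sketch of what happens if we ignore it. It uses freshly simulated values rather than our dataset, and wraps the first call in tryCatch() so that the error is captured as text instead of stopping the script.

```r
set.seed(42)
values <- rnorm(52)

one_season  <- ts(values, frequency = 52)  # a single 'big period' of data
ten_seasons <- ts(values, frequency = 5)   # just over ten 'big periods'

# With only one season, stl() refuses to run; we keep the error message
one_season_result <- tryCatch(
  stl(one_season, s.window = "periodic"),
  error = function(e) conditionMessage(e)
)

# With enough seasons, the decomposition works
ten_seasons_result <- stl(ten_seasons, s.window = "periodic")

print(one_season_result)
print(class(ten_seasons_result))
```

This makes sense if you think about it: R can't tell what a ‘typical’ season looks like if it has only seen one.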

# Time Series Decomposition

decomposed <- stl(ts_data_02, s.window = "periodic")

plot(decomposed)

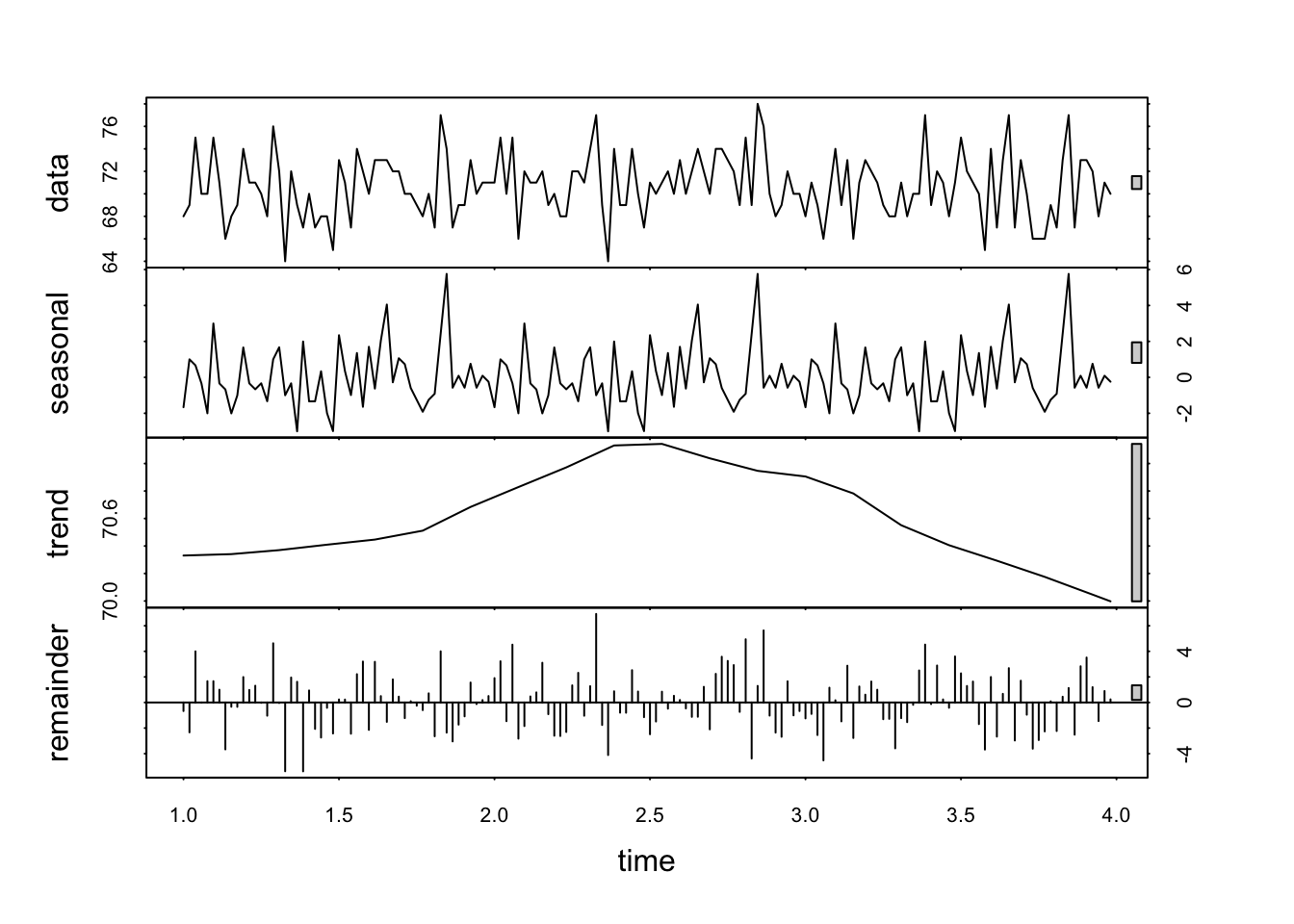

The resulting plot has four panels, each representing a different component of the time series. Because R understands that this data is in the form of a time-series, it can examine some specific features of such data.

At first glance it looks a bit complicated. Here’s how to interpret each of these outputs:

Data: this is the observed data. The plot is the same as those above.

Seasonal component: isolates and shows the seasonal pattern within the data. Look for regular, repeating patterns that occur at fixed intervals. For example, in weekly data, you might see a pattern that repeats on particular days of the week (as is the case here). The amplitude (height) of the seasonal component gives an indication of the strength of the seasonality. Larger amplitudes mean stronger seasonal effects.

Trend component: represents the long-term progression or movement of the data, stripping away the seasonal effects and irregular fluctuations. It shows how the data’s central tendency changes over time. An upward trend indicates an increase over time, a downward trend indicates a decrease, and a flat trend indicates stability.

Remainder (Irregular or Residual Component): shows what’s left after the seasonal and trend components have been removed from the data. It represents the noise or random fluctuation that can’t be attributed to the seasonality or trend. Ideally, in a well-decomposed series, this component should appear as random noise, without any discernible pattern. If there are patterns in the remainder, it may suggest that the seasonal or trend components have not fully captured all the systematic information in the data.
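The panels can also be pulled out as numbers, which is useful if you want to go beyond eyeballing the plot. The following sketch uses simulated data in the same style as our running example (it isn't the same object as decomposed above, and the variable names are illustrative). It checks that the three components add back up to the observed data, and compares how much each one varies.

```r
set.seed(42)
x <- ts(10 + rnorm(52, sd = 0.4), frequency = 5)

fit <- stl(x, s.window = "periodic")

# One column per component: seasonal, trend, remainder
parts <- fit$time.series

# The decomposition is additive: the components sum to the original series
rebuilt <- rowSums(parts)
max_gap <- max(abs(rebuilt - as.numeric(x)))

# A rough guide to which component dominates: its standard deviation
spread <- apply(parts, 2, sd)

print(colnames(parts))
print(max_gap)
print(round(spread, 3))
```

For purely random data like this, we would expect the remainder to account for most of the variation, which is a useful sanity check before reading too much into a seasonal pattern.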

What can you observe in the plots above, especially those relating to seasonality and trend?

Based on the steps outlined above, conduct a basic time series analysis for the dataset df that is available here:

df <- read.csv('https://www.dropbox.com/scl/fi/nh221nxwv0fp0fy773i2j/golf_data.csv?rlkey=666lxnhwazjpg70j9wgowog8x&dl=1')

df <- df[1:156,]

df$Date <- NULL

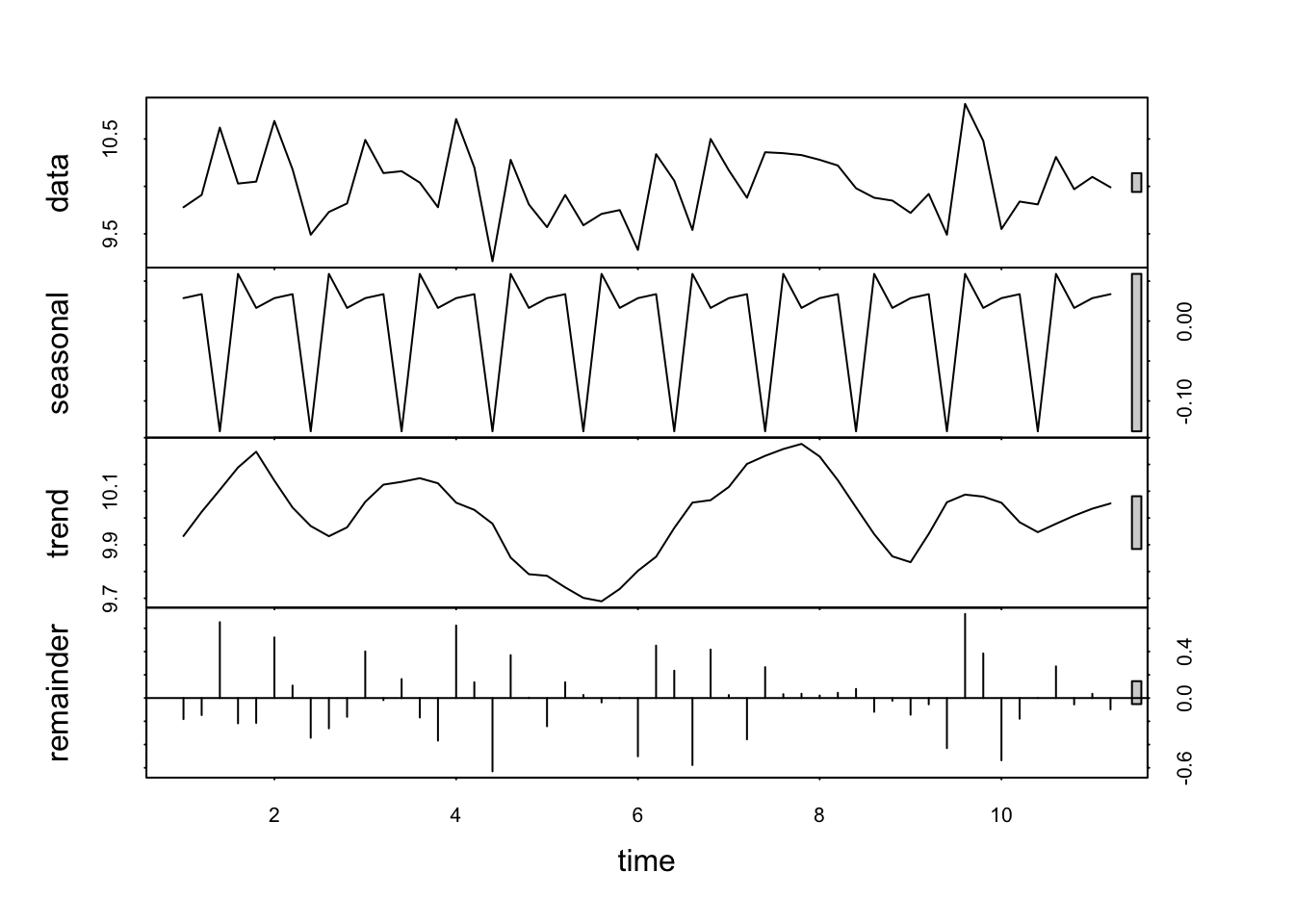

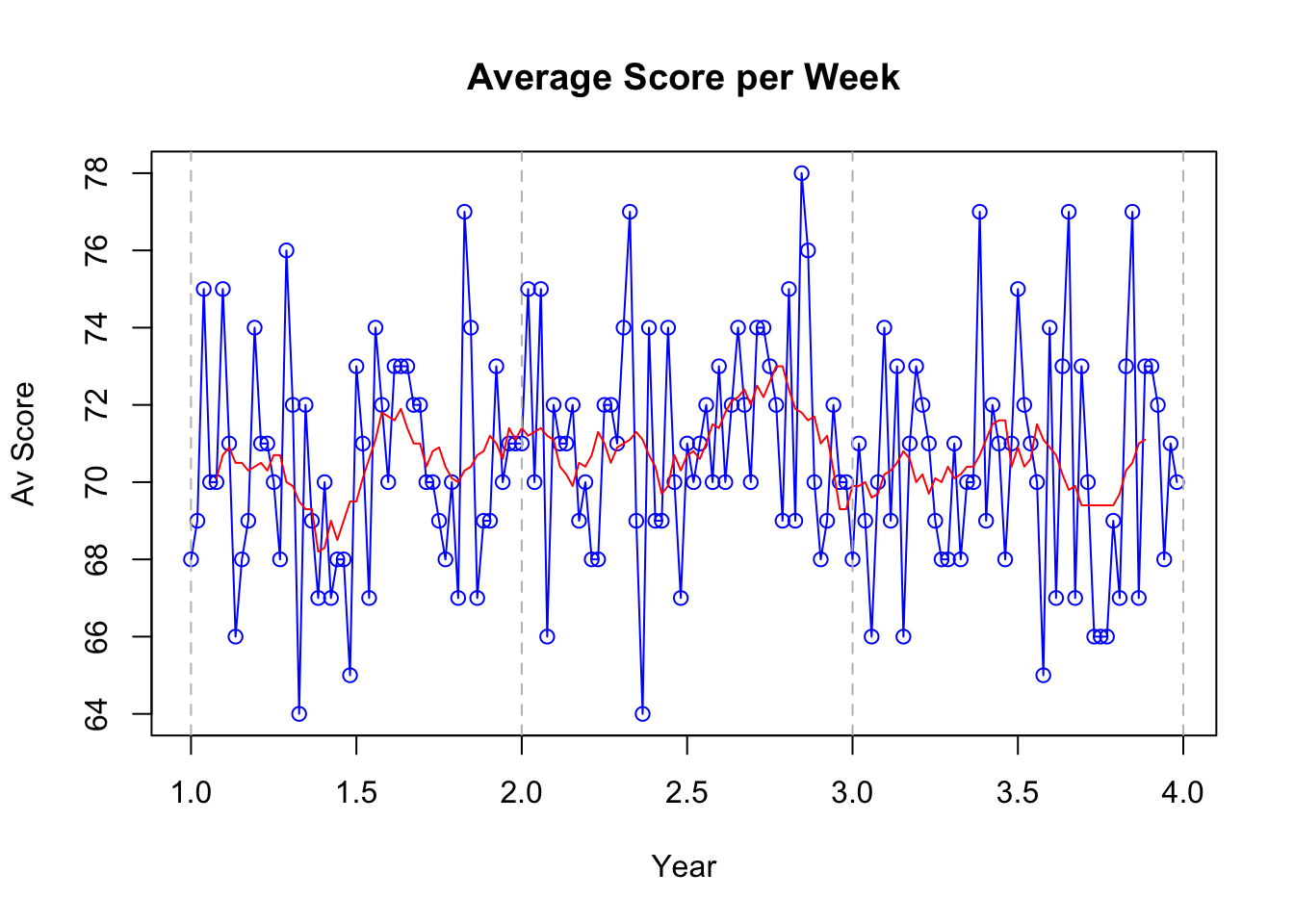

df$Score <- round(df$Score,0)The dataset contains data gathered from a golfer who has recorded their average score for per week over a period of three years.

Notes:

Create an appropriate time-series object for the data.

Don’t ignore descriptive statistics and exploratory data analysis - these should be your first stages regardless of what you’re going to do next.

Focus on interpreting visual plots, as well as statistical output.

Be ready to report your findings regarding seasonality in the data.

Crucially, did the golfer perform better in certain seasons than others?

What was the overall trend in their performance over time?

# Convert to time series

golf_data <- ts(df$Score, frequency = 52)

# Plotting time series

plot(golf_data, type = "o", col = "blue", main = "Average Score per Week", xlab = "Year", ylab = "Av Score")

# Apply a simple moving average

# Calculate the moving average over a window of 10 observations (weeks)

moving_avg <- rollmean(golf_data, k = 10, fill = NA, align = "center")

lines(moving_avg, col="red")

# for clarity, I've added a vertical line at the start of each year

# (the x-axis is measured in years, so the lines go at 1, 2 and 3)

abline(v = seq(1, floor(max(time(golf_data)))), col = "gray", lty = 2)

# Time Series Decomposition

decomposed <- stl(golf_data, s.window = "periodic")

plot(decomposed)
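To turn the decomposition into answers to the two questions in the notes, one option is to summarise the seasonal and trend components numerically. The sketch below does this on simulated scores, not on the real golf data: the downward drift, the yearly wave and the noise level are all invented for illustration, so the numbers it prints say nothing about the actual golfer.

```r
set.seed(1)

# Simulated weekly scores over three years (hypothetical values):
# a slow improvement, a yearly cycle, and some random noise
weeks <- 1:156
score <- 80 - 0.02 * weeks + 3 * sin(2 * pi * weeks / 52) + rnorm(156, sd = 0.5)
sim_golf <- ts(score, frequency = 52)

fit <- stl(sim_golf, s.window = "periodic")

# Seasonal effect for each week of the year (lower score = better golf)
seasonal <- as.numeric(fit$time.series[, "seasonal"])
seasonal_by_week <- tapply(seasonal, as.integer(cycle(sim_golf)), mean)
best_week <- as.integer(names(which.min(seasonal_by_week)))

# Overall trend: change in the trend component from first to last week
trend <- as.numeric(fit$time.series[, "trend"])
trend_change <- trend[length(trend)] - trend[1]

print(best_week)
print(round(trend_change, 2))
```

The same steps should work on golf_data by swapping it in for sim_golf, although it's still worth looking at the plots first, since a single number can hide a lot.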